Zoos are not exactly where you expect to find advanced machine learning. Usually, it's the domain of silicon labs and software engineers. But at Hong Kong's Ocean Park, a quiet tech shift is happening. Zookeepers are now trading stopwatches and clipboards for neural networks.

The park is using custom computer vision to monitor its giant pandas and Sichuan golden snub-nosed monkeys. It is not just about counting heads or making sure nobody escaped. It is about reading micro-behaviors that the human eye misses.

If you think this is just a flashy tech gimmick, you are wrong. Tracking animal behavior manually is a massive pain. Traditionally, a researcher sits with a clipboard for an hour, twice a week, logging what an animal does. It's tedious. It's prone to human bias. And the moment the researcher walks away, the data stops. Animals do not just act when we are looking. They have rich, complex lives at 3:00 AM.

By switching to autonomous tracking, Ocean Park has moved to 24/7 observation. The data set isn't just bigger. It is also far more representative, because it captures the behavior that happens when nobody is standing at the glass.

Moving Past Simple Detection

Most commercial computer vision systems are built for humans. They count bodies entering a subway or cars crossing an intersection. When applied to animals, older software basically just saw a "blob." It could tell you a panda was in the frame, but it couldn't tell you if that panda was eating, scratching, or pacing.

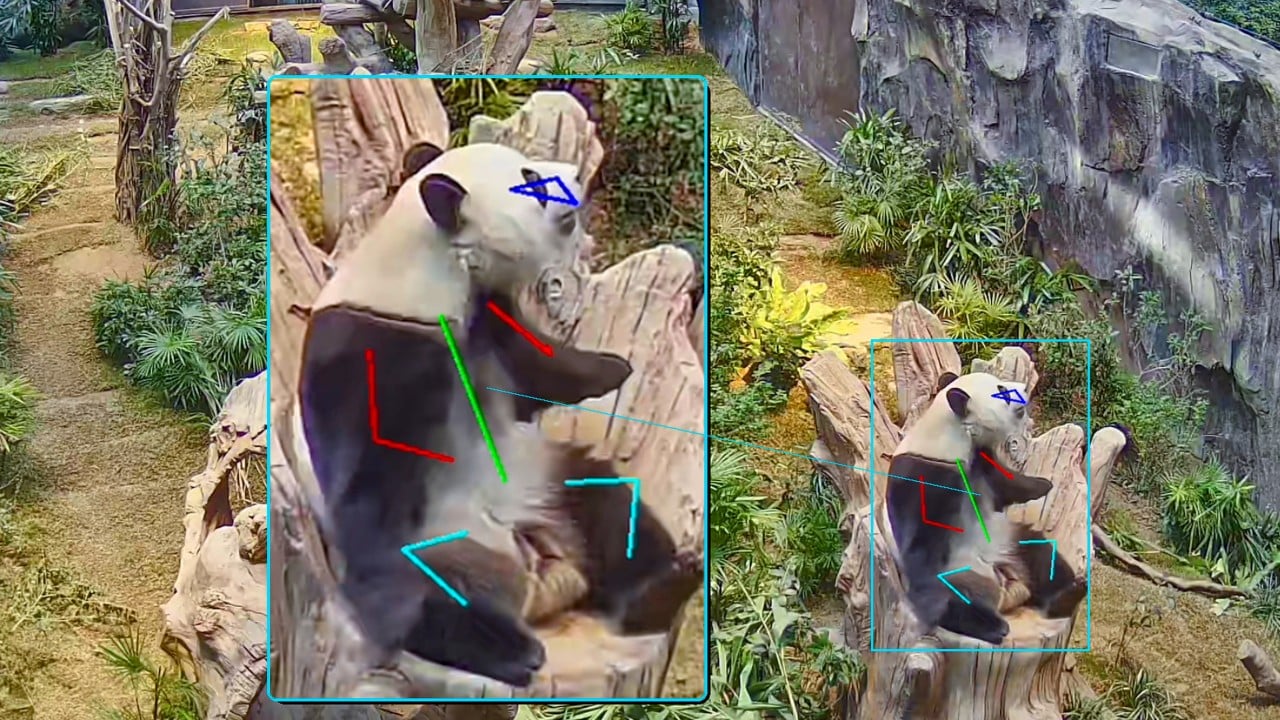

Ocean Park’s tech team adapted standard people-counting algorithms into a specialized skeletal detection system. The software maps out the anatomical structure of the animal. For a giant panda, it identifies the head, torso, and limbs.

Instead of just logging "panda is present," the system recognizes specific poses. It knows the difference between a panda sitting still and one walking. It can spot a panda raising its arms. That might seem like a random movement, but to a biologist, it is a precursor to a roll.
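To make the jump from "panda is present" to "panda is walking" concrete, here is a minimal rule-based sketch that labels a frame from skeletal keypoints. It assumes a hypothetical skeleton with `head`, `torso`, `left_paw` and `right_paw` points in image pixel coordinates. The 5-pixel movement threshold is illustrative only. Ocean Park's system is a trained model, not hand-written rules like these.

```python
from math import dist

# Hypothetical skeleton: keypoint name -> (x, y) in pixels; y grows downward in images.
Keypoints = dict[str, tuple[float, float]]


def arms_raised(kp: Keypoints) -> bool:
    """True if both front paws are above the head (smaller y means higher in the frame)."""
    head_y = kp["head"][1]
    return kp["left_paw"][1] < head_y and kp["right_paw"][1] < head_y


def classify_pose(prev: Keypoints, curr: Keypoints, move_threshold: float = 5.0) -> str:
    """Label the current frame as 'arms_raised', 'walking' or 'sitting'.

    move_threshold is an illustrative torso displacement in pixels per frame,
    not a calibrated value.
    """
    if arms_raised(curr):
        return "arms_raised"
    torso_shift = dist(prev["torso"], curr["torso"])
    return "walking" if torso_shift > move_threshold else "sitting"


# Made-up example: the whole body shifts 12 px to the right between two frames.
frame1 = {"head": (100, 50), "torso": (100, 90), "left_paw": (80, 120), "right_paw": (120, 120)}
frame2 = {"head": (112, 50), "torso": (112, 90), "left_paw": (92, 120), "right_paw": (132, 120)}
print(classify_pose(frame1, frame2))  # walking
```

A learned classifier replaces the hand-tuned threshold, but the input is the same: a sequence of keypoints per animal rather than a single bounding "blob."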

The system uses a three-tier architecture to process what it sees:

- The Base Layer: Individual identification. It recognizes which specific animal is in the frame with about 95% accuracy.

- The Intermediate Layer: Temporal neural networks. This layer tracks actions over time. It can annotate social grooming or play.

- The Top Layer: Predictive analytics. This layer connects behavior to biological cycles. The park reports it can predict breeding cycles with 87% accuracy. Considering how notoriously difficult panda breeding is, that number is a massive deal.
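The intermediate layer's job, turning a stream of per-frame labels into behaviors with a start and an end, can be sketched without any neural network. The version below swaps in a simple sliding-window majority vote as a stand-in for the temporal model Ocean Park describes; the window size, label names and one-label-per-second sampling are all assumptions.

```python
from collections import Counter
from itertools import groupby


def smooth_labels(labels: list[str], window: int = 5) -> list[str]:
    """Replace each frame label with the most common label in a centered window.

    A crude stand-in for a temporal model: it suppresses one-frame flickers.
    Ties go to the label encountered first in the window.
    """
    half = window // 2
    smoothed = []
    for i in range(len(labels)):
        segment = labels[max(0, i - half): i + half + 1]
        smoothed.append(Counter(segment).most_common(1)[0][0])
    return smoothed


def to_bouts(labels: list[str], fps: float) -> list[tuple[str, float, float]]:
    """Collapse runs of identical labels into (behavior, start_s, end_s) bouts."""
    bouts = []
    frame = 0
    for label, run in groupby(labels):
        length = len(list(run))
        bouts.append((label, frame / fps, (frame + length) / fps))
        frame += length
    return bouts


# Made-up per-second labels with a single spurious "walking" frame at index 4.
raw = ["sitting"] * 4 + ["walking"] + ["sitting"] * 4 + ["walking"] * 6
print(to_bouts(smooth_labels(raw), fps=1.0))
# [('sitting', 0.0, 9.0), ('walking', 9.0, 15.0)]
```

A real temporal network learns which sequences of poses make up grooming or play, but its output has the same shape: labeled time intervals rather than isolated frames.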

Tailoring the Habitat to Real Data

The real magic happens when you connect this data to daily animal care. Let's talk about enrichment.

Zookeepers use enrichment items—puzzles, ice blocks, new scents, climbing frames—to keep animals mentally stimulated. In the past, placing these items was largely educated guesswork. You put a puzzle in the corner and hoped the animal liked it.

Now, the AI generates spatial heatmaps of the enclosures. Caretakers can see exactly where giant pandas An An and Ke Ke, or the golden monkey family (adults Qi Qi and Le Le, and their daughter Little Red Bean), spend their time.
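At its core, a spatial heatmap is a count of how often an animal's tracked position falls into each cell of a grid laid over the enclosure. The sketch below assumes positions in metres sampled at a fixed rate (so counts are proportional to time spent); the enclosure size, grid resolution and sample points are invented for illustration.

```python
def occupancy_grid(
    positions: list[tuple[float, float]],
    width: float,
    height: float,
    cols: int,
    rows: int,
) -> list[list[int]]:
    """Count position samples per cell of a cols x rows grid over a width x height area."""
    grid = [[0] * cols for _ in range(rows)]
    for x, y in positions:
        # Clamp so points exactly on the far edge land in the last cell.
        c = min(int(x / width * cols), cols - 1)
        r = min(int(y / height * rows), rows - 1)
        grid[r][c] += 1
    return grid


# Hypothetical 20 m x 10 m enclosure split into 5 m cells (4 columns x 2 rows).
samples = [(1.0, 1.0), (2.0, 3.0), (3.5, 8.0), (19.0, 9.5), (20.0, 10.0)]
print(occupancy_grid(samples, width=20, height=10, cols=4, rows=2))
# [[2, 0, 0, 0], [1, 0, 0, 2]]
```

Rendering the grid as colors is a display step; the decision-relevant information is already in these counts.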

If the heatmap shows the pandas are hugging the left wall all day, the team doesn't just shrug. They can strategically place a bamboo puzzle or a climbing structure on the right side of the enclosure. It forces the animals to explore, move, and think. It fights off cage lethargy and mimics the physical exertion of the wild.
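The "hugging the left wall" check can be expressed as a simple rule over an occupancy grid: if one half of the enclosure holds most of the samples, suggest the other half for the next enrichment item. This is a hypothetical decision rule, and the 70% skew threshold is an arbitrary example value, not something Ocean Park has published.

```python
from typing import Optional


def side_shares(grid: list[list[int]]) -> tuple[float, float]:
    """Return (left_share, right_share) of samples, splitting columns in half.

    With an odd column count, the middle column is counted on the right.
    """
    half = len(grid[0]) // 2
    left = sum(sum(row[:half]) for row in grid)
    right = sum(sum(row[half:]) for row in grid)
    total = left + right
    return left / total, right / total


def enrichment_side(grid: list[list[int]], skew: float = 0.7) -> Optional[str]:
    """Suggest the under-used side when one side holds more than `skew` of the samples."""
    left, right = side_shares(grid)
    if left > skew:
        return "right"
    if right > skew:
        return "left"
    return None  # usage is balanced enough; no side-based suggestion


# Made-up counts: 30 of 34 samples fall in the two left-hand columns.
grid = [[9, 7, 1, 0], [8, 6, 2, 1]]
print(enrichment_side(grid))  # right
```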

The Golden Monkey Social Map

For the Sichuan golden snub-nosed monkeys, the tech goes a step further. Monkeys are intensely social primates. Their health is tied to their family dynamics.

The system measures the physical distance between individual monkeys over time. If the juvenile, Little Red Bean, suddenly starts isolating herself from her parents, the system flags it. Is she sick? Is there a social rift? In a traditional setup, you might not notice that subtle drift until visible physical symptoms appear. The software acts as an early warning system.
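One plausible way to turn "isolating herself" into a number is to average, over many frames, the distance from the focal animal to her nearest family member and compare it with a baseline. The sketch below does that; the positions in metres, the baseline approach and the 2x ratio are assumptions for illustration, not details of Ocean Park's system.

```python
from math import dist
from statistics import mean

Frame = dict[str, tuple[float, float]]  # animal name -> (x, y) position in metres


def mean_nearest_distance(frames: list[Frame], focal: str, others: list[str]) -> float:
    """Average over frames of the distance from `focal` to its closest companion."""
    return mean(min(dist(f[focal], f[o]) for o in others) for f in frames)


def isolation_flag(baseline_m: float, today_m: float, ratio: float = 2.0) -> bool:
    """Flag when today's nearest-companion distance exceeds `ratio` x the baseline.

    ratio=2.0 is an illustrative threshold, not a published value.
    """
    return today_m > ratio * baseline_m


parents = ["Qi Qi", "Le Le"]
# Invented positions: normally about 1 m from a parent, today about 4 m away.
baseline_frames = [
    {"Qi Qi": (0, 0), "Le Le": (1, 0), "Little Red Bean": (1, 1)},
    {"Qi Qi": (2, 2), "Le Le": (3, 2), "Little Red Bean": (3, 3)},
]
today_frames = [{"Qi Qi": (0, 0), "Le Le": (1, 0), "Little Red Bean": (1, 4)}]

baseline = mean_nearest_distance(baseline_frames, "Little Red Bean", parents)
today = mean_nearest_distance(today_frames, "Little Red Bean", parents)
print(baseline, today, isolation_flag(baseline, today))  # 1.0 4.0 True
```

Using the nearest companion rather than the average distance to everyone means the flag fires only when she has drifted away from both parents, not when she simply sits closer to one of them.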

Practical Takeaways for Wildlife Management

The setup at Ocean Park isn't just a fun case study. It is a blueprint for modern zoological philosophy. If you manage a facility, work in wildlife rehab, or just care about ethical animal management, there are clear lessons here.

1. Augment, Don't Replace, Human Keepers

The goal here isn't to fire zookeepers and let robots feed the monkeys. It's the opposite. It frees humans from mindless video review so they can do actual care. Instead of spending ten hours fast-forwarding through grainy night-vision footage, a biologist opens a dashboard, looks at the anomalies, and goes straight to the enclosure to help.
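The "anomalies" on such a dashboard have to come from somewhere. A common, simple approach is a z-score against each animal's own history: flag any daily metric that sits far outside its usual range. The sketch below assumes hypothetical metric names and a 2.5-standard-deviation cutoff; it is not a description of the park's actual dashboard.

```python
from statistics import mean, stdev


def flag_anomalies(
    history: dict[str, list[float]],
    today: dict[str, float],
    z_threshold: float = 2.5,
) -> dict[str, float]:
    """Return {metric: z-score} for metrics more than z_threshold std devs from history.

    history maps each metric to past daily values (at least two per metric);
    today maps each metric to today's value.
    """
    flagged = {}
    for metric, values in history.items():
        mu, sigma = mean(values), stdev(values)
        if sigma == 0:
            continue  # no variation in the history to compare against
        z = (today[metric] - mu) / sigma
        if abs(z) > z_threshold:
            flagged[metric] = round(z, 2)
    return flagged


# Made-up daily totals for one animal.
history = {
    "walking_minutes": [120, 130, 125, 118, 127],
    "feeding_minutes": [300, 310, 295, 305, 290],
}
today = {"walking_minutes": 60, "feeding_minutes": 302}
print(flag_anomalies(history, today))  # {'walking_minutes': -12.93}
```

The keeper still decides what a sudden drop in walking means. The code only narrows hours of footage down to the one number worth looking at.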

2. Use Heatmaps for Dynamic Enclosures

Static enclosures are boring for smart animals. Use movement data to redesign spaces dynamically. Rotate food hiding spots toward the areas the animal visits least.
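Rotating hiding spots "toward the areas the animal visits least" needs one extra rule so the food doesn't land in the same cold corner every day. The sketch below picks the least-visited grid cell that was not used in the last few placements. The cooldown of three placements and the sample grid are hypothetical choices.

```python
def next_hiding_spot(
    grid: list[list[int]],
    recent: list[tuple[int, int]],
    cooldown: int = 3,
) -> tuple[int, int]:
    """Pick the least-visited (row, col) cell not used in the last `cooldown` placements.

    recent lists previous spots, most recent last. Ties go to the first cell in
    row-major order. If every cell is on cooldown, all cells are considered.
    """
    blocked = set(recent[-cooldown:]) if cooldown > 0 else set()
    cells = [(r, c) for r in range(len(grid)) for c in range(len(grid[0]))]
    candidates = [rc for rc in cells if rc not in blocked] or cells
    return min(candidates, key=lambda rc: grid[rc[0]][rc[1]])


# Made-up occupancy counts (rows x columns of an enclosure grid).
grid = [[9, 7, 1, 0], [8, 6, 2, 1]]
print(next_hiding_spot(grid, recent=[]))                # (0, 3)
print(next_hiding_spot(grid, recent=[(0, 3)]))          # (0, 2)
print(next_hiding_spot(grid, recent=[(0, 3), (0, 2)]))  # (1, 3)
```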

3. Open the Data Vault

Ocean Park is taking its real-world animal datasets and sharing them with local schools. This is a brilliant move for community buy-in. If you are running a conservation program, find a way to let local students or university labs use your anonymized data for their STEM projects. It builds your talent pipeline and gets the community invested in your animals.

If you want to apply this kind of setup yourself, do not start by trying to build a custom neural network from scratch. Look at open-source pose estimation frameworks like DeepLabCut or SLEAP (Social LEAP Estimates Animal Poses). These are academic toolkits designed exactly for tracking animal body parts. You don't need a theme park budget to start running basic pose estimation on your own local wildlife footage. Set up a trail cam, run the footage through an open-source pipeline, and start mapping what is actually happening in your local ecosystem when the sun goes down.
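Once a pose-estimation tool has processed your footage, a useful first step is discarding low-confidence keypoints before drawing conclusions. Tools like these attach a confidence score to each predicted point (DeepLabCut calls it likelihood). The sketch below assumes you have already converted the tool's exported file into generic `(timestamp, body_part, x, y, confidence)` tuples. That row shape, the 0.6 cutoff and the sample rows are all assumptions. It then counts confident detections per hour to show when the animals are actually active.

```python
from collections import Counter
from datetime import datetime

# Assumed row shape: (timestamp, body_part, x, y, confidence)
Row = tuple[datetime, str, float, float, float]


def confident_points(rows: list[Row], min_confidence: float = 0.6) -> list[Row]:
    """Keep only keypoints whose confidence meets the cutoff (0.6 is an arbitrary start)."""
    return [r for r in rows if r[4] >= min_confidence]


def activity_by_hour(rows: list[Row]) -> Counter:
    """Count detections per hour of day (0-23)."""
    return Counter(ts.hour for ts, *_ in rows)


# Invented trail-cam detections.
rows = [
    (datetime(2024, 5, 1, 2, 15), "nose", 10.0, 20.0, 0.95),
    (datetime(2024, 5, 1, 2, 45), "nose", 12.0, 21.0, 0.30),  # dropped: low confidence
    (datetime(2024, 5, 1, 3, 5), "tail_base", 40.0, 22.0, 0.88),
    (datetime(2024, 5, 1, 14, 0), "nose", 5.0, 5.0, 0.70),
]
print(activity_by_hour(confident_points(rows)))
# Counter({2: 1, 3: 1, 14: 1})
```

Filtering first matters most with night-vision footage, where poor lighting tends to produce the least reliable keypoints.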